MINDWAR

The cognitive and information assault on the West. A symposium with Bianka Banova and Renée DiResta.

➡️ ‼️ IMPORTANT‼️

I know I said I’d have this reading list for you five days ago. But I completely forgot that this coming Sunday is Easter. When I realized this, I rescheduled everything, because I’m sure at least half the group won’t be free on Easter Sunday. So you have plenty of time for the reading, because we’ll be taking Easter Sunday off. Our symposium will convene on

SUNDAY, APRIL 12

that is to say,

NOT THIS COMING SUNDAY

And a blockbuster lineup awaits. …

Our 📌SUNDAY, APRIL 12 symposium will be devoted to the war for our minds.

Hostile states, and state-adjacent actors, are attacking our cognition. They are waging war on our social trust, our attention, the legitimacy of our political systems, our perception, our understanding of narrative and fact, and ultimately, our decision-making. And they’re winning.

On SUNDAY, APRIL 12, we’ll be discussing the cognitive and information onslaught against liberal democracies—the history and theory of cognitive warfare, the way our adversaries manipulate our information environment, why these efforts succeed, and whether there’s a way for open societies to defend themselves without becoming closed, censorious, and paranoid in the process.

I assume that many of you will already be familiar, perhaps even very familiar, with this topic. (If you’ve been reading the Cosmopolitan Globalist for a while, you certainly are.) So while I’m offering a fairly comprehensive reading list, you should feel free to skim anything that doesn’t tell you something you didn’t know.

We have two superb guests of honor on SUNDAY, APRIL 12—the best guests we could possibly have, actually. Bianka Banova writes the Waronomics Substack. From her “About” page:

We are in a period of geopolitical realignment that most Western analysis is not equipped to explain. The old frameworks—American primacy, liberal internationalism, the comforting fiction that economic integration prevents wars—have failed. The new frameworks haven’t arrived yet. Into that gap flows propaganda, wishful thinking, and analysis that tells comfortable audiences what they want to hear.

Waronomics was built to fill a different gap: the one between Eastern and Western understanding.

I’m Bianka—a Bulgarian-born, Switzerland-based analyst working in tech (by day) and analyzing the world we live in by night. I bring 20 years of multidisciplinary study across history, macroeconomics, defense, energy, trade, philosophy, and ideology, combined with the lived experience of growing up in the part of Europe that has always borne the costs of great power miscalculation.

I challenge Russian propaganda, Western complacency, and academic orthodoxies with equal enthusiasm. I read sources across multiple languages and worldviews. I have no institutional affiliations to protect and no ideology to serve.

Her field guide to Russian propaganda is the perfect introduction to this week’s reading list. (Be sure to watch the Bezmenov interview if you’ve never seen it; it’s a classic.)

… Throughout the Cold War, NATO obsessed over Soviet missiles, tank divisions, submarine patrols, and nuclear warheads. Reasonable enough—the arsenal was enormous. Intelligence agencies poured resources into tracking Russian military capabilities, mapping launch sites, running war games.

Meanwhile, the KGB allocated roughly 85 percent of its resources to something else entirely.

Not espionage. Not stealing secrets. Subversion.

Yuri Bezmenov, a KGB propaganda officer who defected in 1970 and gave a series of interviews throughout the 1980s, put it with the bluntness that only someone who had operated the machinery could manage: the objective was not to steal your classified documents. The objective was to change how an entire generation perceives reality—so gradually, so thoroughly, that even when confronted with authentic proof of manipulation, they would refuse to believe it. Not because the evidence was insufficient, but because their psychological framework for processing it had been fundamentally altered.

85 percent. Let that number sink in.

Now consider: Russia’s nuclear arsenal may or may not be fully operational. Maintenance is expensive, corruption in Russia is endemic, components degrade. Western analysts have spent decades debating the actual readiness of Russian strategic forces, and nobody outside the General Staff knows the real answer.

But the propaganda machine? The subversion apparatus? That weapon is maintained daily, funded generously, and staffed professionally. It is tested in live environments across dozens of countries simultaneously. And its track record is, by any honest intelligence assessment, spectacular.

Russia built a weapon that does not require a single warhead to function, cannot be intercepted by missile defence, leaves no radiation signature, and makes the target population defend the attack. If a defense contractor delivered that capability, they would win every procurement competition for the next century.

We should study it the way we study any masterwork of engineering—with the seriousness it deserves. So please, stop treating Russian propaganda and psyop campaigns as something that only the village idiots fall for, because there are people with PhDs in quantum physics and analytical minds that can make multiple millions on the stock market that have been affected by this sophisticated apparatus. This. Is. No. Joke.

Renée DiResta is the author of Agents of Influence on Substack. She’s a professor at Georgetown; formerly, she was the technical research manager at the Stanford Internet Observatory.

In 2018, at the behest of the bipartisan leadership of the Senate Select Committee on Intelligence, I led an investigation into the Russian Internet Research Agency’s multi-year effort to manipulate American society. I also presented public testimony. A year later, I led an additional investigation into influence capabilities that the GRU used alongside its hack-and-leak operations in the 2016 election.

I joined Stanford Internet Observatory in June of 2019, with the immediate goal of developing a deeper understanding of state actor disinformation campaigns as they played out all over the world. Russia’s military intelligence agency—the GRU—and mercenary organizations such as Wagner Group were an early focus, as was China’s growing outward-facing narrative manipulation capacity. I developed a model of full-spectrum propaganda, examining how state actors incorporated social media into their existing broadcast propaganda capacity, spanning the overt to covert spectrum. I’ve examined state actors spanning the globe for nearly a decade, including within the United States; my team produced a major report detecting some of the US Pentagon’s covert information operations, leading the Department of Defense to re-evaluate US government strategy. I’ve been a leader on major inter-institutional partnerships studying election rumors and disinformation, and served on the Lancet Commission in a multi-year project examining online drivers of vaccine hesitancy.

In this 2024 editorial for The New York Times, she explained why she’s no longer at the Stanford Internet Observatory:

In 2020 the Stanford Internet Observatory, where I was until recently the research director, helped lead a project that studied election rumors and disinformation. As part of that work, we frequently encountered conspiratorial thinking from Americans who had been told the 2020 presidential election was going to be stolen. … The belief that “the steal” was real led directly to the events of Jan. 6, 2021.

… Within a couple of years, the same online rumor mill turned its attention to us—the very researchers who documented it. … [I]t started with claims that our work was a plot to censor the right. The first came from a blog related to the Foundation for Freedom Online, the project of a man who said he “ran cyber” at the State Department. This person, an alt-right YouTube personality who’d gone by the handle Frame Game, had been employed by the State Department for just a couple of months.

Using his brief affiliation as a marker of authority, he wrote blog posts styled as research reports contending that our project, the Election Integrity Partnership, had pushed social media networks to censor 22 million tweets. He had no firsthand evidence of any censorship, however: his number was based on a simple tally of viral election rumors that we’d counted and published in a report after the election was over. Right-wing media outlets and influencers nonetheless called it evidence of a plot to steal the election, and their followers followed suit.

… After the House flipped to Republican control in 2022, the investigations began. The “22 million tweets” claim was entered into the congressional record by witnesses during a March 2023 hearing of a House Judiciary subcommittee. Two Republican members of the subcommittee, Jim Jordan and Dan Bishop, sent letters demanding our correspondence with the executive branch and with technology companies as part of an investigation into our role in a Biden “censorship regime.” … It was obvious to us what would happen next: The documents we turned over would be leaked and sentences cherry-picked to fit an existing narrative. This supposed evidence would be fodder for hyperpartisan influencers, and the process would begin again. Indeed, this is precisely what happened …

… The networks spreading misleading notions remain stronger than ever, and the networks of researchers and observers who worked to counter them are being dismantled. Universities and institutions have struggled to understand and adapt to lies about their work, often remaining silent and allowing false claims to ossify. Lies about academic projects are now matters of established fact within bespoke partisan realities. Costs, both financial and psychological, have mounted. Stanford is refocusing the work of the Observatory and has ended the Election Integrity Partnership’s rapid-response election observation work. Employees, including me, did not have their contracts renewed.

… The investigations have led to threats and sustained harassment for researchers who find themselves the focus of congressional attention. Misleading media claims have put students in the position of facing retribution for an academic research project. Even technology companies no longer appear to be acting together to disrupt election influence operations by foreign countries on their platforms.

Republican members of the House Judiciary subcommittee reacted to the Stanford news by saying their “robust oversight” over the center had resulted in a “big win” for free speech. This is an alarming statement for government officials to make about a private research institution with First Amendment rights.

Sam Harris spoke to her about this experience and about Russian influence operations in the US. Please listen to it—it’s a terrific introduction to the topic. (I used a gift link; you should be able to listen to the whole thing.)

She’s also the author of Invisible Rulers: The People Who Turn Lies into Reality. If you have the time to read it this week, it’s optional, but I highly recommend it. If you don’t have time to read it, here’s a review that summarizes her arguments, and there are many more on the Internet:

Invisible Rulers is so clear and free of jargon that university professors might consider using it as the core text for a seminar on the 21st century information crisis. …

She usefully structures her analysis—and ascribes responsibility for the social ills she describes—based on three categories: influencers, algorithms, and crowds. Influencers range from Trump and Musk to anti-vaxxer and erstwhile former independent presidential candidate Robert F. Kennedy, Jr.; Pizzagate and pro-MAGA provocateur Jack Posobiec; Amazing Polly, a QAnon thought leader who helped instigate online hysteria about a phony child trafficking plot involving the home furnishings company Wayfair; and Matt Taibbi, a formerly left-leaning journalist who made an unlikely transformation into an ally of right-wing politicians like Representative Jim Jordan (R-OH) in a McCarthyite “investigation” of DiResta and certain other misinformation researchers they falsely accused of conspiring with social media companies and the Biden administration to silence conservatives. Motivated by ideological goals, raw thirst for attention, clever monetization schemes, or some combination of the three, influencers share a talent for manipulating engagement-sensitive social media algorithms in a manner likely to get the software systems to amplify their content.

But critically, DiResta emphasizes, none of this would have impact without the crowds—the individuals who interact with and, above all, share influencers’ content when prompted to do so by various recommendation engines.

You could also watch one of the many interviews she’s done about the book. Just make sure that you’re familiar with the arguments she makes.

The Concept

Cognitive Warfare, by François du Cluzel. You can skim this. (I believe this was originally written for NATO.) I’m suggesting this not because it’s sensational, but because it’s basic: I want to be sure we’re all on the same conceptual page. It lays out the now well-accepted argument that modern conflict increasingly turns on the shaping of human perceptions and behaviors (as opposed to blowing things up).

“The existential threat from cyber-enabled information warfare,” by Herbert Lin. Lin wrote this in 2019, warning that “cyber-enabled information warfare has also become an existential threat in its own right, its increased use posing the possibility of a global information dystopia, in which the pillars of modern democratic self-government—logic, truth, and reality—are shattered, and anti-Enlightenment values undermine civilization as we know it around the world.”

STUDY QUESTIONS:

How do you understand the term “cognitive warfare”? How analytically useful do you find the term? Does it clarify something genuinely new, or does it just describe the very old practice of propaganda, psychological warfare, and strategic deception in technologically grandiose language?

At what point does the concept become so expansive that it ceases to discriminate among different kinds of influence?

Is Lin right to call this an existential threat?

Adversary Doctrine

Rivalry in the information sphere: Russian conceptions of information confrontation, by RAND. Be sure to read this one. The key argument: Russian strategic thought understands information war not as an occasional tactic, but as a standing condition of rivalry. This is a major conceptual difference from the way this kind of warfare is viewed in the West, which still tends to view it as an annoying add-on, not a continuous domain of competition.

Theory of reflexive control: Origins, evolution and application in the framework of contemporary Russian military strategy. Skim this if you have time. If you’re not sure that you understand what “reflexive control” means, make sure you read enough to understand it.

Chinese influence operations. This is extraordinarily thorough. After reading it, you’ll feel as if a Panzer tank is barreling down on you and the only weapon you’ve got is a feather duster. Read the sections on the “Three Warfares,” and skim Chapter IX on “Information manipulations.” Also have a look at case study 6, “The Infektion 2.0 Operation during the Covid19 Pandemic.” If you’d like to read more about China’s doctrine, this is also very good, but not required: China’s military strategy in the new era; likewise, this article is a good guide to the Chinese literature on the topic: “The future of China’s cognitive warfare: Lessons from the war in Ukraine.”

Iran’s Information Warfare, by Itay Haiminis.

Cognitive combat: China, Russia, and Iran’s information war against Americans. This is optional, but a good summary.

STUDY QUESTIONS:

Is “information confrontation” best understood as a military doctrine, an intelligence practice, a political technology, or all three at once?

Does the Russian conception work because it is sophisticated, or because open societies offer absurdly permissive terrain?

Compare and contrast Russia’s and China’s approach to information warfare.

Find five obvious (but unmarked) specimens of Russian, Chinese, and Iranian information efforts in the wild, on social or traditional media. Bring them to the discussion and be prepared to explain how you recognized them.

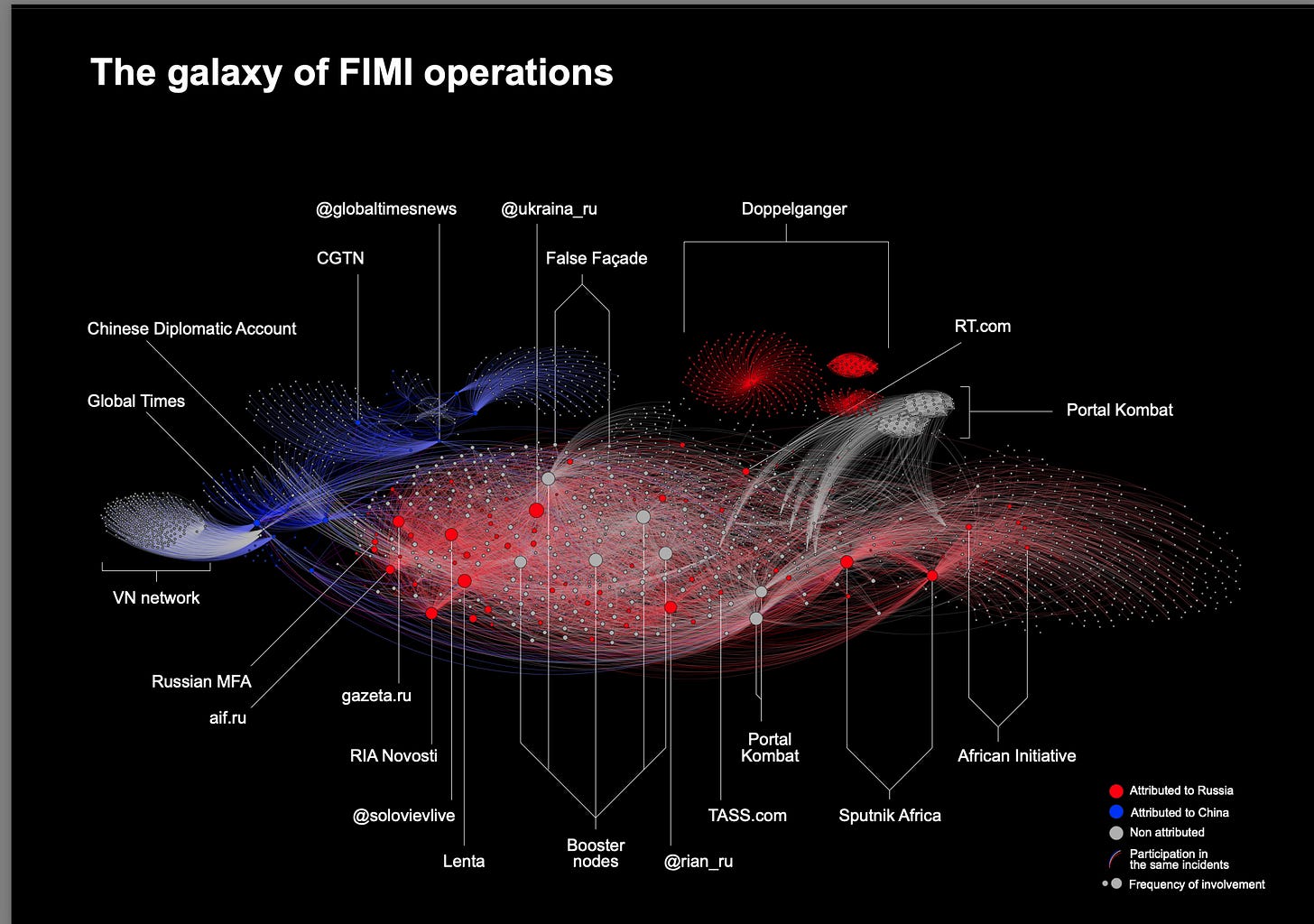

Current Operations

4th EEAS Annual Report on Foreign Information Manipulation and Interference Threats. This is probably the most useful institutional guide to concrete operations. The 2026 EEAS report uses the EU’s FIMI—Foreign Information Manipulation and Interference—framework; it now emphasizes deterrence as well as mapping. The associated reporting says the 2025 dataset documented 540 incidents involving roughly 10,500 channels and other assets, which is useful not because such figures are the whole story, but because they convey scale, infrastructure, and persistence. Feel free to skim.

3rd EEAS Report on FIMI Threats, methodology section (Chapter Two, pp. 15-19). This explains how the EU distinguishes, or tries to distinguish, FIMI from ordinary political speech, routine bias, or domestic partisanship.

Adversarial Threat Report, first half of 2026, META. Skim Chapter Four.

From jihad to justice: Hamas’s outreach to the international arena

A Chinese influence campaign against Israel as a means of harming the United States

No need to read all of these; just read until you get the idea:

Iran targets US public opinion with online information war. The Iranian regime has been running an online influence campaign using AI-generated photographs, videos and memes to sow confusion about the war in the Middle East. The main goal is to ramp up anti-war sentiment in the US and pressure President Trump to end the conflict.

Iran’s strategic communications in the campaign: Intimidation, deterrence, and resilience

Iran social media strategy pivots to information war amid US-Israel attack

Iran’s information warfare during the December 2025-January 2026 protests and its continued influence on Israel and the West. (If you’re short of time, just read the abstract.)

STUDY QUESTIONS:

What, according to the EU, counts as foreign information manipulation rather than merely hostile speech? Are you satisfied with their framework?

According to the EU, are these campaigns centrally directed, opportunistically networked, or best understood as ecosystems?

How much does scale matter compared with timing, elite amplification, or the exploitation of pre-existing domestic cleavages?

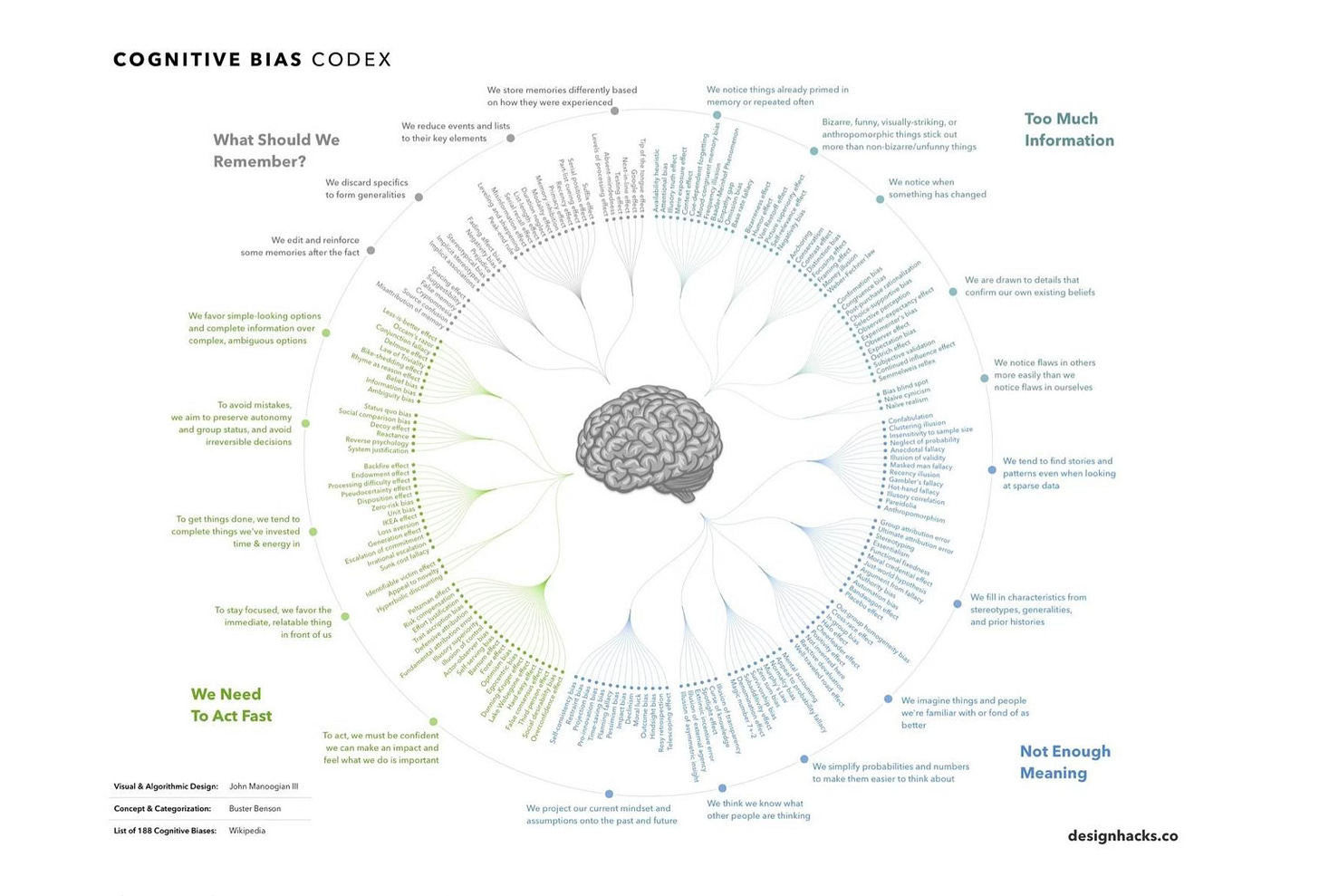

The Cognitive Substrate

Please read at least one of the following articles. Choose whichever one sounds most useful to you. The point of this is to focus, at least a bit, on the problem of human cognition—why people attend to, believe, share, defend, and internalize dubious claims. If we’re going to think about solutions, this side of it is important; disinformation isn’t just injected into passive populations. Read as much of the below as you need to get a sense of the features of cognition and identity that make certain kinds of manipulation effective.

The Psychology of Misinformation Across the Lifespan, by Sarah M. Edelson et al. A review of what makes people susceptible to misinformation, why they share it, and which interventions show promise.

Prebunking interventions based on “inoculation” theory can reduce susceptibility to misinformation across cultures, by Jon Roozenbeek et al. This is short and directly tied to what can be done.

The psychological drivers of misinformation belief and its resistance to correction, by Ullrich K. H. Ecker et al. This is paywalled, but if you have access to it, it’s a denser review essay.

The complicated truth of countering disinformation. This is short and worthwhile.

If you feel like buying it, or if you’re able to find a PDF copy (I couldn’t), Jon Roozenbeek and Sander van der Linden also wrote a book about the features of cognition and identity that make manipulation effective. You can read quite a bit of The Psychology of Misinformation on Google Books.

STUDY QUESTIONS:

Are citizens chiefly victims, participants, or co-conspirators in misinformation ecologies?

Is the key variable false belief, or is it identity reinforcement and affective tribalism?

Do you find the language of “susceptibility” useful, or do you find it intolerably paternalistic?

Why the US Is Failing

HASC Subcommittee on Cyber, Innovative Technology and Information Systems, Testimony by Herbert Lin, who argues that the Department of Defense is poorly authorized, structured, and equipped to cope with the information war threat. Please prioritize this in your reading.

How unmoderated platforms became the frontline for Russian propaganda, by Renée DiResta.

Remedies

The new governors: The people, rules, and processes governing online speech, by Kate Klonick. You won’t have time to read all of this, but it is the canonical article on regulating social media. Skim it, at least, and make sure you understand her fundamental argument—you might try asking ChatGPT to summarize it for you.

Private online platforms have an increasingly essential role in free speech and participation in democratic culture. But while it might appear that any internet user can publish freely and instantly online, many platforms actively curate the content posted by their users. How and why these platforms operate to moderate speech is largely opaque.

This Article provides the first analysis of what these platforms are actually doing to moderate online speech under a regulatory and First Amendment framework. Drawing from original interviews, archived materials, and internal documents, this Article describes how three major online platforms—Facebook, Twitter, and YouTube—moderate content and situates their moderation systems into a broader discussion of online governance and the evolution of free expression values in the private sphere. It reveals that private content-moderation systems curate user content with an eye to American free speech norms, corporate responsibility, and the economic necessity of creating an environment that reflects the expectations of their users. In order to accomplish this, platforms have developed a detailed system rooted in the American legal system with regularly revised rules, trained human decision making, and reliance on a system of external influence.

This article argues that to best understand online speech, we must abandon traditional doctrinal and regulatory analogies and understand these private content platforms as systems of governance. These platforms are now responsible for shaping and allowing participation in our new digital and democratic culture, yet they have little direct accountability to their users. Future intervention, if any, must take into account how and why these platforms regulate online speech in order to strike a balance between preserving the democratizing forces of the internet and protecting the generative power of our new governors.

Lawful but awful? Control over legal speech by platforms, governments, and internet users. A short guide to three possible approaches to governing legal speech.

Democratic defense against disinformation 2.0, by Alina Polyakova and Daniel Fried. You can skim this, but try to get a sense of what they recommend and why.

Democratic Offense Against Disinformation, by Alina Polyakova and Daniel Fried. Skim this, too. Focus especially on the section titled “Policy responses woefully lag.”

Countering Disinformation Effectively: An Evidence-Based Policy Guide, by Jon Bateman and Dean Jackson. An antidote to policy romanticism. Its central finding: There is no single intervention that is at once well studied, highly effective, and easy to scale.

Post-January 6th deplatforming reduced the reach of misinformation on Twitter, by McCabe et al. Deplatforming reduced circulation of misinformation among the deplatformed users and their followers. Something rare in this literature: evidence, not intuition. If you don’t have access to Nature, just read the abstract.

Free speech on the Internet: The crisis of epistemic authority. Prioritize this one, too.

This is very interesting, and if you don’t have time to watch it, at least skim the transcript on YouTube. It will help you to understand how social media companies used to address this problem, which makes it clear why all hell broke loose when, following Musk’s lead, they decided it wasn’t a problem that required addressing:

STUDY QUESTIONS:

Which is the graver danger in a liberal order: underreaction that leaves epistemic institutions defenceless, or overreaction that licenses censorship and bureaucratic overreach?

Does deplatforming work because it changes beliefs, because it changes distribution, or simply because it fractures networks of amplification?

Is it too late? Is the problem now so deep in the structure of the Internet that we’ll never be able to fix it?

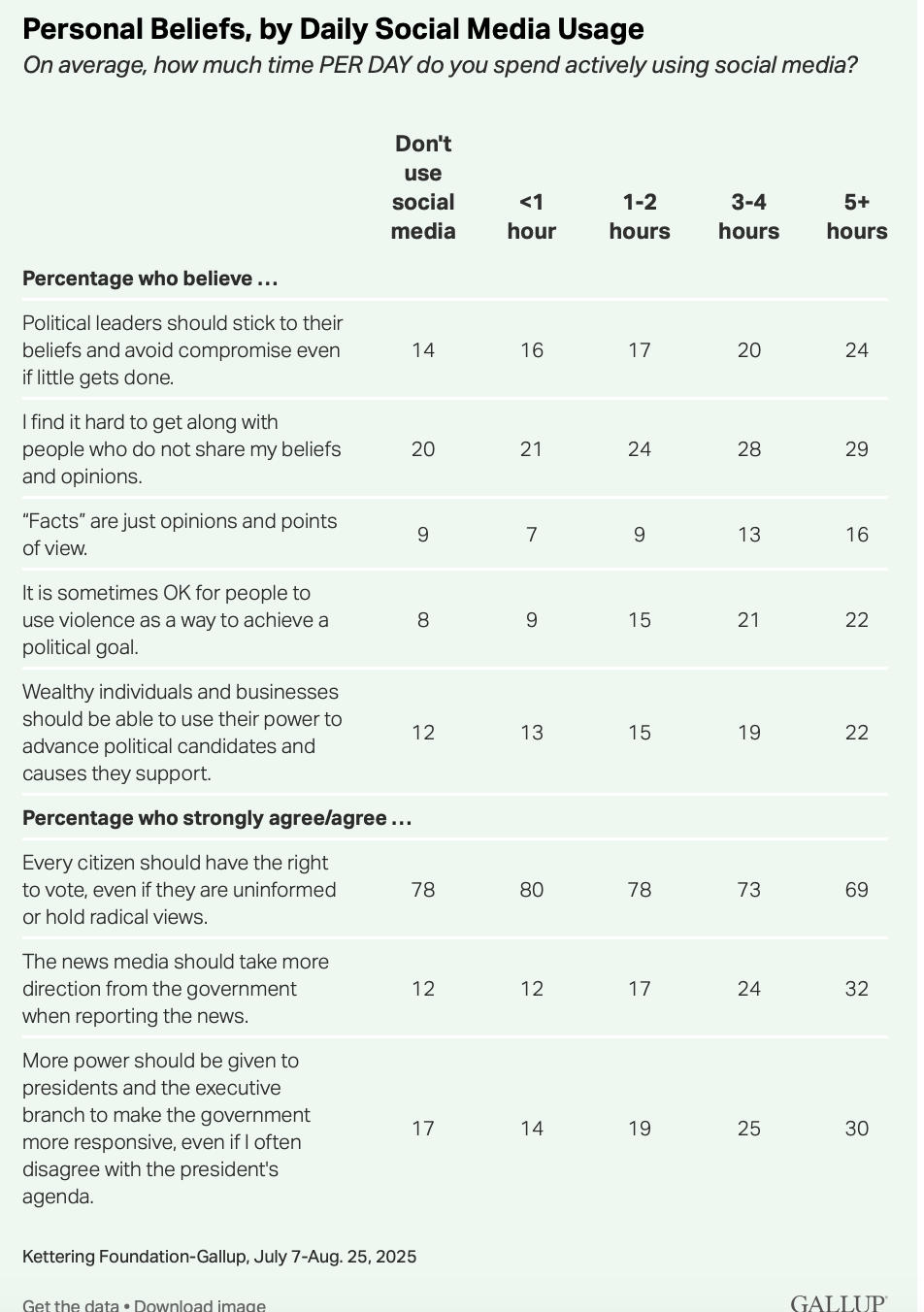

Miscellaneous

Social media use linked to mixed views on democracy. Hard to disambiguate causation and correlation here, but suggestive nonetheless:

PromptPasta: Stirring the pot of online discourse. How generative AI is being used and confused by inauthentic influence campaigns. The Trump Administration is now using bots domestically outside of an election cycle, a practice once the exclusive purview of authoritarian states. A bot network benefiting Trump, Elon Musk and Vivek Ramaswamy is running in what is probably collusion with an entity affiliated with Musk or the Trump administration. It attacks their critics, praises Trump’s policies and appointees, targets swing states, and criticizes Ukraine.

Transparency is censorship. The EU fined X for transparency violations. Guess what happened next.

Europe’s internet law is a Rorschach test. The facts, the fiction, and the political theater around the Digital Services Act.

Five key takeaways from the leaked Facebook documents. A trove of documents highlight the company’s anguish over its failure to attract teens and halt the spread of misinformation, hate speech and other harmful content. (If you’re curious, the documents are available here.)

If you have time, please watch this—it’s a good discussion of the difference between content moderation and censorship; whether the public square is still public; who has First Amendment rights on private platforms; why people don’t use “free speech absolutist” platforms; and the role of bots and AI in online speech.

Don’t worry if you can’t read all of this. Just make sure you read what I’ve marked as especially important. Skim the rest.

See you on …

SUNDAY, APRIL 12!

that is to say …